
Date

ZOOM Meeting Information:

Monday, November 21st, 2022 at 9am PT/12pm ET

Join Zoom Meeting

Meeting ID: 790 499 9331

Attendees:

- Sean Bohan (openIDL)

- Mason Wagoner (AAIS)

- Yanko Zhelyazkov (Senofi)

- Peter Antley (AAIS)

- Ken Sayers (AAIS)

- Tsvetan Georgiev (Senofi)

- Faheem Zakaria (Hanover)

- Jeff Braswell (openIDL)

- Nathan Southern (openIDL)

- Ash Naik (AAIS)

- Milind Zodge (Hartford)

- Dhruv Bhatt

Agenda:

- Scheduling:

- No ArchWG Call Mon 11/28

- No TSC Call next Thurs

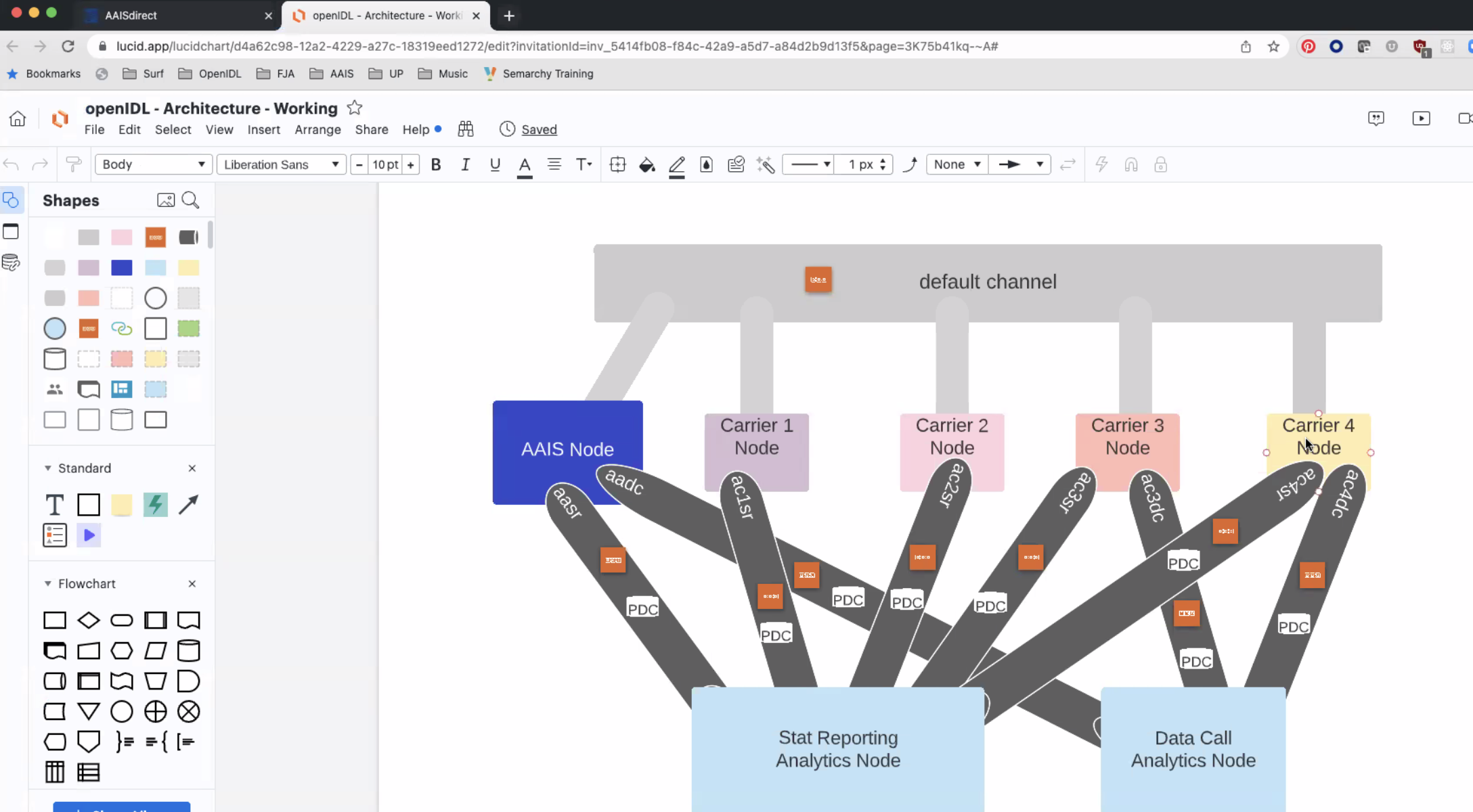

- Recap of architecture diagram

Notes:

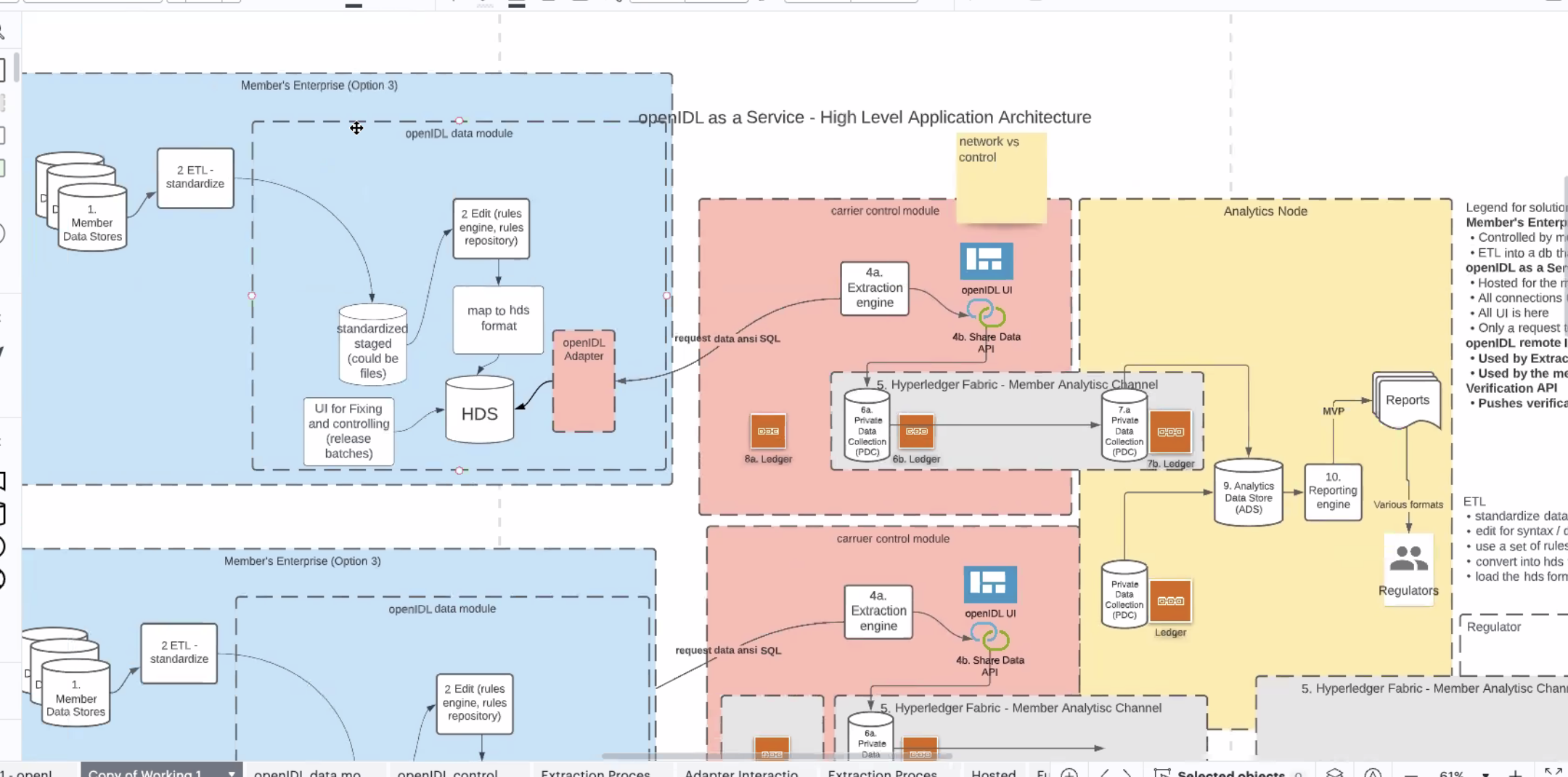

- Last week - high level functionality of different (nodes)

- Vocab - node looks like configurable

- Raw data, uses ETL to create stat plan data currently sent to AAIS/ISO/wherever

- for openIDL process to load into HDS

- process/functionality to load data into HDS is what we call the data module

- documenting high-level functionality in the different modules

- main function of the data module is to get data into HDS

- take data out of HDS, provide to control module

- Control Module - where control of data call happens

- creation thru UI

- likes/consents from UI

- control module instantiated mult times on the network

- one for every org on the network (at least 1 per)

- allows them to engage network as an org, participate in data calls, agree to like/consent to diff reports

- data module inside the enterprise

- control module hosted outside

- works for some and not for other carriers

- carrier/enterprise is hosting the control module and data module

- not making a decision right now, just illustrating the functionality supported by these modules

- Carrier node will have modules that let them engage network functionality

- "Node" = pink and blue box

- data module and control module from openIDL

- Fabric has notion of org or peer node - NOT openIDL node

- chain code user interface request

- Modules - logically separated by what their responsibilities are as part of the openIDL network

- adapter - where we execute the extract of the data (inside data module right now)

- data module

- gets data into HDS

- extraction of data from HDS

- responding sync or async to requests for data

- control module manages data calls, likes, consents

- requests extraction of data

- initiated on control module at the right time
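The split of responsibilities described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the class and method names (`DataModule`, `ControlModule`, `load`, `extract`, `request_extraction`) are hypothetical and not taken from the openIDL codebase, the HDS is stood in for by an in-memory list, and the ledger is left out entirely.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set

Record = Dict[str, object]
# Hypothetical stand-in for an extraction: a function from HDS rows to result rows.
Extraction = Callable[[List[Record]], List[Record]]


@dataclass
class DataModule:
    """Hypothetical data module: gets data into the HDS and takes it back out."""
    hds: List[Record] = field(default_factory=list)  # in-memory stand-in for the HDS

    def load(self, records: List[Record]) -> int:
        """Load records into the HDS and return the new row count."""
        self.hds.extend(records)
        return len(self.hds)

    def extract(self, extraction: Extraction) -> List[Record]:
        """Adapter step: run the extraction against a copy of the HDS rows."""
        return extraction(list(self.hds))


@dataclass
class ControlModule:
    """Hypothetical control module: one per org, tracks consents, requests extraction."""
    org: str
    data_module: DataModule
    consents: Set[str] = field(default_factory=set)

    def consent(self, data_call_id: str) -> None:
        self.consents.add(data_call_id)

    def request_extraction(self, data_call_id: str, extraction: Extraction) -> List[Record]:
        """Ask the data module for results, but only for a consented data call."""
        if data_call_id not in self.consents:
            raise PermissionError(f"{self.org} has not consented to {data_call_id}")
        return self.data_module.extract(extraction)


# Usage with made-up records and a made-up data call ID.
data = DataModule()
data.load([{"state": "ND", "insured": False}, {"state": "ND", "insured": True}])
control = ControlModule(org="carrier-1", data_module=data)
control.consent("dc-001")
uninsured = control.request_extraction(
    "dc-001", lambda rows: [r for r in rows if not r["insured"]]
)
```

In this sketch consent is a precondition for extraction rather than its trigger; when the extraction is actually requested is left to the caller.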

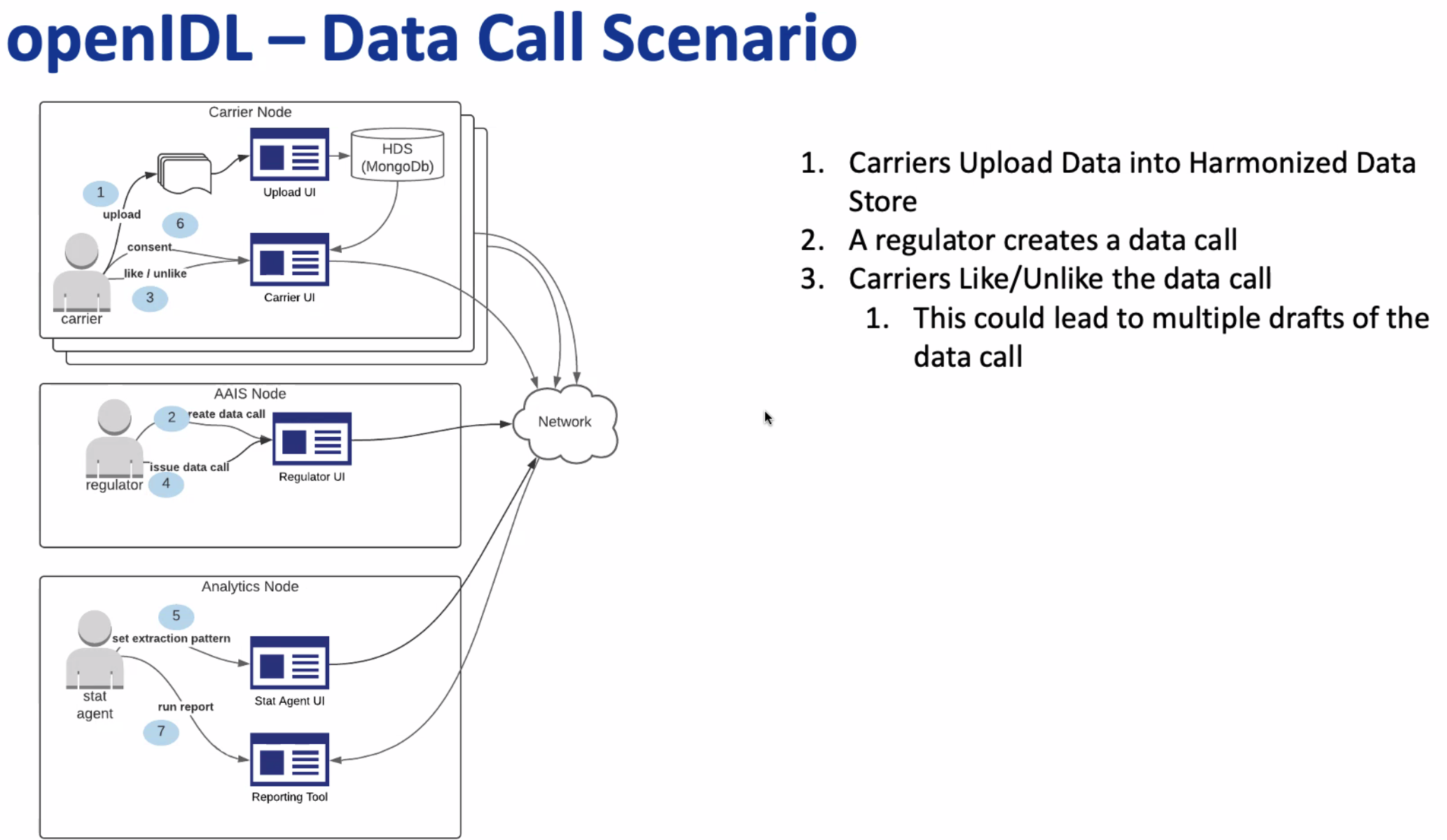

- <Ken Powerpoint Demo for ND>

- outlines flow of data call

- Taking full process and breaking out across all modules

- know the flow and the function of each, break down what is happening in the different parts

- data call is the business request ("DOI wants to see all uninsured motorists May 2021") - the regulator requests that a business report be created

- EP (extraction pattern) is how to get the data out of the HDS (as described by DOI in data call)

- EP - can reuse or use again a data call, possible many to many but logically 1-1

- for data calls generally have 1:1 (diff dates and times)

- same EP, new data call for all intents and purposes

- normally 1 data call and 1 EP, issue another EP create another data call

- assign EP to data call

- EP is map reduce for mongo, could be anything (SQL, whatever)

- EPs may change time to time

- may want to reuse data calls - review EP once and when Data Call comes "I know this"

- governance process? Use, agree, reuse?

- data calls in general - may have things like stat report run every year, agree as long as nothing changes but date

- Classification

- maybe carrier has already seen and agreed at EP level

- make sure doesn't change on the ledger

- currently getting in weeds - EP is stored on ledger, then connected and stored in data call

- needs to go thru review process, once reviewed and approved, can be reused, added to chaincode to support it

- requesting of data now using private data collection (PDC) to move results from carrier to analytics node

- control module moving results of EP, carrier control module via adapter grabs results via PDC, where report processor will eventually run

- move data from result of API call to analytics channel

- Result data to analytics channel PDC

- encapsulating ledger tech so the knowledge of how to interact with the ledger goes through the control module

- some funct left

- once thru control module, data from carrier node is now in the analytics node in a PDC on a per-carrier basis

- carrier 1 thru 11, now need to trigger report

- Maturity of the data call

- all of data and consents completed before deadline

- end of deadline, cron job executes and looks for processor

- linkage between results of EP and the data call, and that's the data call ID

- currently consent is trigger for EP, should not happen

- connection between result (run map-reduce for EP, new collection in Mongo, also stored in S3 for logging), calls into chaincode (CA01 - specific analytics channel)

- when consent given,

- data avail to analytics node as soon as consent happens

- we SHOULD hold off until maturity

- "2 phase consent" not addressed yet

- two things there

- raw summation of data across all carriers doesn't make sense

- if you do averaging on the carrier node, it needs to be re-averaged

- minimal processing on analytics node

- Dale mentioned "if I'm one of 10 carriers, I don't want to be noticed"

- anonymized consent

- degree of flexibility at the beginning

- 2 phase consent is easy to see and understand what it means

- current processing not happening at the right time

- do now have a fully functioning report processor
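The re-averaging point raised in the notes can be made concrete with a small worked example. This is a sketch with made-up numbers: `CarrierResult` and `combined_mean` are hypothetical names, and the idea that each carrier node reports a sum and a record count (rather than a finished average) is an assumption used for illustration, not a decision recorded in these notes.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class CarrierResult:
    """Hypothetical per-carrier extraction result (illustrative fields only)."""
    carrier_id: str
    total: float  # sum of the measured value on the carrier node
    count: int    # number of records behind that sum


def combined_mean(results: Iterable[CarrierResult]) -> float:
    """Re-average across carriers by combining sums and counts."""
    results = list(results)
    count = sum(r.count for r in results)
    if count == 0:
        raise ValueError("no records to average")
    return sum(r.total for r in results) / count


# Made-up numbers: carrier-1 averages 100 over 10 records,
# carrier-2 averages 300 over 30 records.
results = [
    CarrierResult("carrier-1", total=1000.0, count=10),
    CarrierResult("carrier-2", total=9000.0, count=30),
]

# Averaging the per-carrier averages ignores how many records each carrier holds.
naive = sum(r.total / r.count for r in results) / len(results)

# Combining sums and counts weights every record equally.
correct = combined_mean(results)
```

Here the average of averages is 200, while the record-weighted mean is 250, which is why a carrier-side average alone is not enough for the analytics node to combine results.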

- Next ArchWG Call

- Detail the control module and analytics module
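As a starting point for that control module discussion, the data call flow from these notes (an approved EP assigned to a data call, consents collected, results held until maturity) might be sketched as follows. Everything here is hypothetical: the names `DataCall`, `carriers_to_extract`, and the set of approved EP IDs are illustrative, the deadline check stands in for the cron job, and no ledger or chaincode interaction is modeled.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import List, Set


@dataclass
class DataCall:
    """Hypothetical data call: one assigned EP, a deadline, and carrier consents."""
    data_call_id: str
    ep_id: str
    deadline: datetime
    consents: Set[str] = field(default_factory=set)

    def consent(self, carrier_id: str, now: datetime) -> None:
        """Record a consent; consents after the deadline are rejected."""
        if now > self.deadline:
            raise ValueError("data call deadline has passed")
        self.consents.add(carrier_id)

    def is_mature(self, now: datetime) -> bool:
        return now >= self.deadline


def carriers_to_extract(call: DataCall, approved_eps: Set[str], now: datetime) -> List[str]:
    """Return the carriers whose results may be released for this data call.

    Consent alone does not release anything: the list stays empty until maturity.
    """
    if call.ep_id not in approved_eps:
        raise ValueError(f"EP {call.ep_id} has not been reviewed and approved")
    if not call.is_mature(now):
        return []
    return sorted(call.consents)


# Usage with made-up IDs and dates.
approved_eps = {"ep-uninsured-motorists"}
call = DataCall("dc-001", "ep-uninsured-motorists", deadline=datetime(2022, 12, 1))
call.consent("carrier-2", now=datetime(2022, 11, 22))
call.consent("carrier-1", now=datetime(2022, 11, 25))

before = carriers_to_extract(call, approved_eps, now=datetime(2022, 11, 30))
after = carriers_to_extract(call, approved_eps, now=datetime(2022, 12, 1))
```

Reusing an EP for a later data call in this sketch would mean creating a new `DataCall` with the same `ep_id` and a new deadline, matching the "same EP, new data call" description in the notes.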


Documentation:

Notes: (Notes taken live in Requirements document)

Recording:
