Date

Monday, March 6, 2023, at 11:30am PT/2:30pm ET

ZOOM Meeting Information:

Join Zoom Meeting

Meeting ID: 790 499 9331

Attendees:

- Mason Wagoner (AAIS)

- David Reale (Travelers)

- Jeff Braswell (openIDL)

- Nathan Southern (openIDL)

- Yanko Zhelyzakov (Senofi)

- Ken Sayers (AAIS)

- Satish Kasala (Hartford)

- Dale Harris (Travelers)

- Ash Naik (AAIS)

Agenda:

- Update on ND POC

- Update on openIDL Testnet (Jeff Braswell)

- Update on internal Stat Reporting with openIDL (Peter Antley)

- Update on Infrastructure Working Group (Sean Bohan)

- MS Hurricane Zeta POC Architecture Discussion (KenS)

- ETLs (PeterA)

- AOB:

- Future Topics:

Notes:

- ND POC

- Carriers received reports of VINs not registered, giving them a chance to see which VINs they reported as insured but are not registered in ND

- Started loading February data as of last Wednesday (5 so far)

- openIDL Testnet

- Test data from 2 carriers, working on new version of testnet using Fabric Operator

- Code Merge and strategies for single codebase (for POCs and members), working towards testnet and mainnet

- Internal Stat Reporting w/ openIDL

- working on production-izing auto data

- working on the decode process and utilizing db for decoding

- Hurricane Zeta POC

- waiting on carrier for internal analysis (AWS region followup)

- GT2

- the fun of inherited CloudFormation templates

- Data Layer for new ETL plan

- high-level overview of GT2

- Recycle as much code as possible and add features required

- GT2 to be renamed

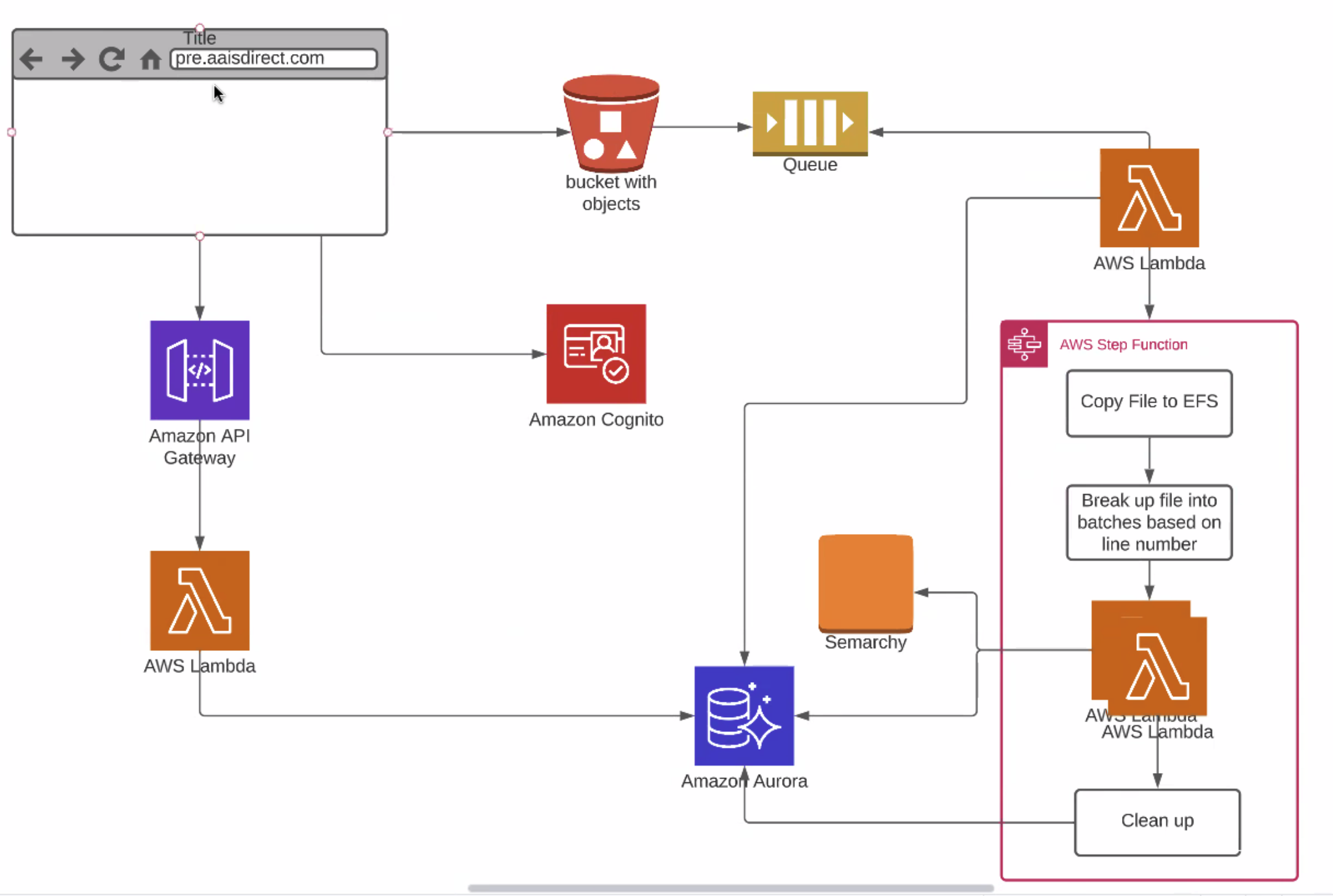

- upload file, Cognito controls auth, app moves text file into bucket, names of files added to queue

- Lambda kicks off, Step Function kicks off, sets up EFS (Elastic File System), breaks the file into batches, runs edit packages and loads to table; any record with an error is written to the error table; results return to user

- users highlight error records against SDMA

- gap in GT2 - does not allow inline editing

- using Aurora (not required) but it lets them stream more data through; EFS + Aurora gives high-throughput data loading

- series of lambdas to load to same table

- combo of policy and claim records, sparse, bunch of nulls, wide and sp

- load data into combined table, get errors, make edits; after records are edited, approve submission and move into HDS
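The batch, edit, and error-routing steps noted above can be sketched roughly as follows. This is a minimal illustration only, not the GT2 code: the function names, the two edit rules, and the 10-character record width are all hypothetical, and in-memory lists stand in for the combined table and the error table.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterable, Iterator, List, Optional, Tuple

# Hypothetical edit rule: takes a raw record, returns an error message or None.
EditRule = Callable[[str], Optional[str]]


def batches(lines: Iterable[str], size: int) -> Iterator[List[str]]:
    """Break an uploaded file's lines into fixed-size batches."""
    batch: List[str] = []
    for line in lines:
        batch.append(line)
        if len(batch) == size:
            yield batch
            batch = []
    if batch:
        yield batch


@dataclass
class LoadResult:
    loaded: List[str] = field(default_factory=list)               # stand-in for the combined table
    errors: List[Tuple[str, str]] = field(default_factory=list)   # stand-in for the error table


def run_edit_package(lines: Iterable[str], rules: List[EditRule], batch_size: int = 2) -> LoadResult:
    """Run every edit rule on every record; route failures to the error list."""
    result = LoadResult()
    for batch in batches(lines, batch_size):
        for record in batch:
            messages = [msg for rule in rules if (msg := rule(record)) is not None]
            if messages:
                result.errors.append((record, "; ".join(messages)))
            else:
                result.loaded.append(record)
    return result


# Two made-up rules, for illustration only.
def not_blank(record: str) -> Optional[str]:
    return None if record.strip() else "blank record"


def fixed_width(record: str, width: int = 10) -> Optional[str]:
    if len(record) == width:
        return None
    return f"expected {width} characters, got {len(record)}"


if __name__ == "__main__":
    result = run_edit_package(["ABCDEFGHIJ", "short", "", "0123456789"], [not_blank, fixed_width])
    print(len(result.loaded), "loaded;", len(result.errors), "sent to error table")
```

In this sketch a record is only loaded when every rule passes, so the error list is what a user would review and correct before approving the submission.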

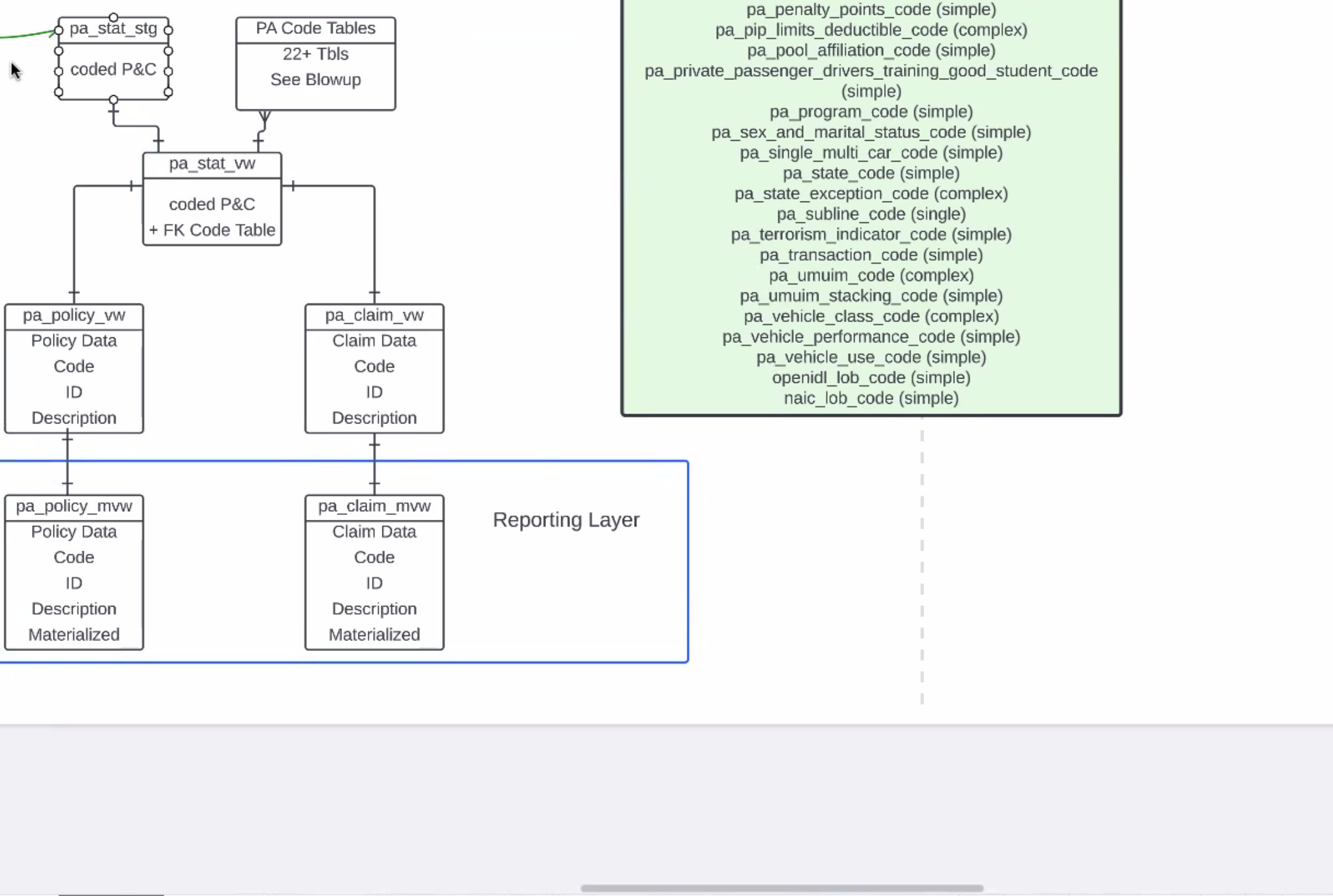

- stg - coded raw stat records, moved into columns

- personal auto stat view - connects codes that come into code tables

- state code can be shared across all lines

- after foreign keys, breaking stuff apart - policy view and claim view

- descriptions - human readable tables

- rows and codes and joins

- lot of IO

- painful to make those joins over and over

- using materialized views with policy and claim view, all policy data, codes, descriptions - ready to materialize

- reporting layer - policy-claim, materialized view each line

- talk in depth on GT2 next week

- based on a ref implementation for ETL - all expected to run in carrier cloud

- all in the HDS

- really discussing these tables; the part that matters, the minimum that needs to happen: need to be able to write extracts on these tables

- carrier could skip ref

- when we get to the bottom tables, will we have different sets of codes for states, etc.? Is that where the standard data model comes into play?

- EPs should expect - not state name but abbreviation, etc.

- AR, ID, etc and primary key for each too

- wide table

- easy to run reports against, still at the same per-transaction level

- each row easy to read for any reporting tool you want to connect to

- coverage codes don't change often

- FlyWay discussion (PR from Joseph for demo)
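The layering discussed in these notes (staging table, shared code tables, a joined view, then a materialized wide table for reporting) could look something like the sketch below. It is an assumption-laden illustration, not the HDS schema: every table name, column name, and coverage code is hypothetical, and it uses SQLite from Python so it runs anywhere. SQLite has no materialized views, so `CREATE TABLE ... AS SELECT` stands in for one here; on a PostgreSQL-compatible database the equivalent would be `CREATE MATERIALIZED VIEW` plus `REFRESH MATERIALIZED VIEW`.

```python
import sqlite3

# Hypothetical schema: names and codes are illustrative, not the real HDS model.
SCHEMA = """
CREATE TABLE state_code (
    id INTEGER PRIMARY KEY,
    abbreviation TEXT NOT NULL UNIQUE      -- e.g. AR, ID; shared across all lines
);
CREATE TABLE coverage_code (
    id INTEGER PRIMARY KEY,
    code TEXT NOT NULL UNIQUE,
    description TEXT NOT NULL              -- human-readable description
);
CREATE TABLE stg_personal_auto (           -- staging: coded raw stat records in columns
    id INTEGER PRIMARY KEY,
    record_type TEXT NOT NULL,             -- 'policy' or 'claim'
    state TEXT NOT NULL,
    coverage TEXT NOT NULL,
    amount REAL
);
CREATE VIEW personal_auto_stat_view AS     -- connects incoming codes to code tables
SELECT s.id, s.record_type,
       sc.abbreviation AS state,
       cc.description  AS coverage_description,
       s.amount
FROM stg_personal_auto AS s
JOIN state_code    AS sc ON sc.abbreviation = s.state
JOIN coverage_code AS cc ON cc.code = s.coverage;

INSERT INTO state_code (abbreviation) VALUES ('AR'), ('ID');
INSERT INTO coverage_code (code, description) VALUES
    ('C1', 'Hypothetical coverage one'),
    ('C2', 'Hypothetical coverage two');
"""


def materialize_report(conn: sqlite3.Connection) -> None:
    """Rebuild the wide reporting table so the joins are paid once, not per report."""
    conn.executescript("""
        DROP TABLE IF EXISTS personal_auto_report;
        CREATE TABLE personal_auto_report AS
            SELECT * FROM personal_auto_stat_view;
    """)


def build_demo() -> sqlite3.Connection:
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany(
        "INSERT INTO stg_personal_auto (record_type, state, coverage, amount) VALUES (?, ?, ?, ?)",
        [("policy", "AR", "C1", 500.0), ("claim", "AR", "C1", 120.0), ("policy", "ID", "C2", 300.0)],
    )
    materialize_report(conn)
    return conn


if __name__ == "__main__":
    conn = build_demo()
    for row in conn.execute("SELECT * FROM personal_auto_report ORDER BY id"):
        print(row)
```

The reporting table keeps one row per staged transaction, so a reporting tool can read it directly without repeating the code-table joins.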

Documentation:

Notes: (Notes taken live in Requirements document)

Recording:
